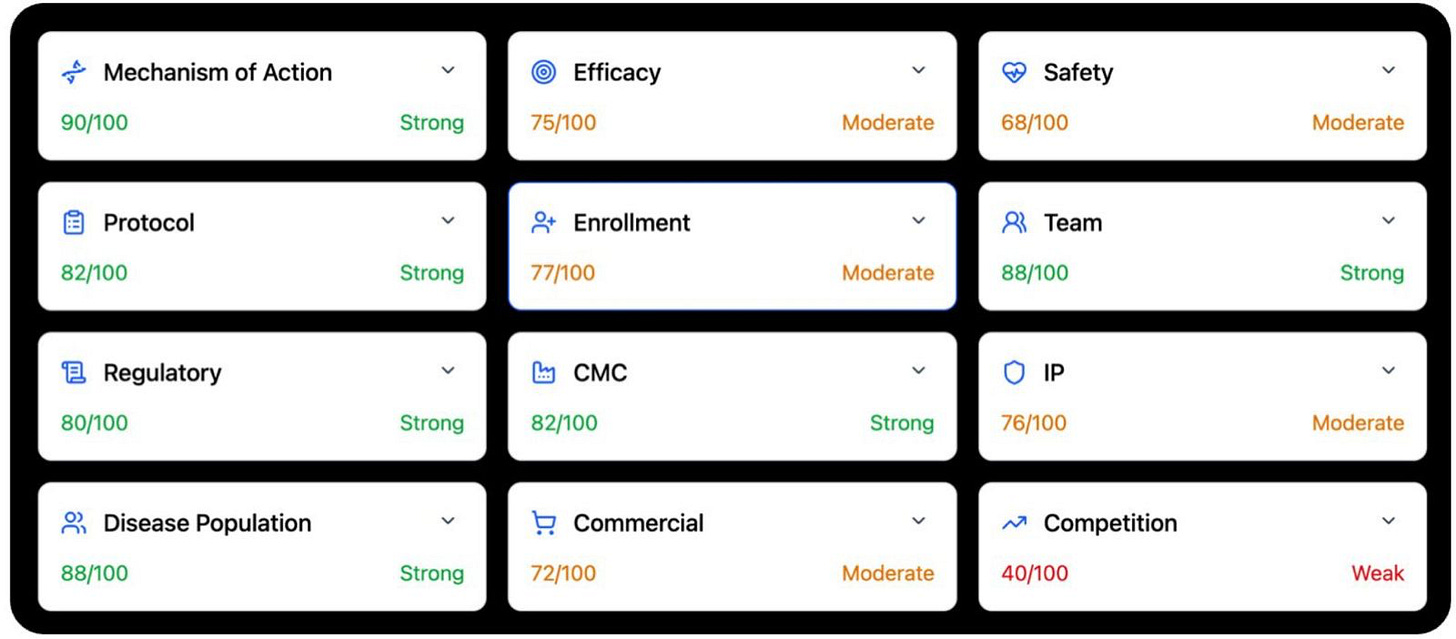

The 12 dimensions of clinical trial intelligence

Biology is not the bottleneck anymore.

Biology is abundant now. Judgment is scarce.

We can generate data at scale. We can sequence almost anything. We can run trials across the world. We can train models on vast corpora of papers, patents, and protocols.

Still, most drugs fail.

Not because we lack information. Because we fail to turn information into judgment.

That is the real problem in drug development. Not data scarcity. Not compute. Judgment.

Biomedical intelligence, whether human or machine, lives or dies on this point. It must work across a stack of decisions that determine whether a therapy succeeds or fails. That stack is not vague. It has shape. It can be named.

It has twelve dimensions.

Each one captures a different layer of reality in life sciences. Together they decide whether a medicine works, gets approved, reaches patients, and creates value. Clinical trial intelligence will not matter until it can handle all twelve. Not one by one. Together.

1. Mechanism of action

This is the base layer. If you do not understand why a drug should work, the rest is built on sand.

A serious system has to start with the facts. What is the trial. Who is the sponsor. What is the intervention. What is the modality. What is the dose. What is the molecular target. What disease is being treated.

But that is just the beginning.

The real task is to move from labels to cause. Not summary. Cause.

You need the human biology of the target. Genetics. Expression. Disease linkage. You need pathway biology. Is the target upstream or downstream. Is it central or peripheral. You need target validation. Knockout. Knockdown. Pharmacologic modulation. You need biomarker evidence in humans that shows the drug is hitting what it claims to hit. And you need history. What else has gone after this target or pathway. What worked. What failed. What failed more than once.

The point is not to gather papers. It is to build a causal case.

This is hard because mechanism is never one question. It is a set of questions. What role does the target actually play in disease. How strong is the human evidence. Is the target a driver, or only a correlate. Are the findings consistent across genetics, cell work, animal work, and pharmacology. Where does the target sit in the pathway. Does that make it powerful, or fragile.

An upstream target may offer more leverage. It may also carry more risk. A downstream target may be safer. It may also matter less.

Then comes translation. Do biomarkers show target engagement in humans. Is there a PK PD relationship. Does biology move with dose.

Then comes class history. Has this kind of target worked before. Has it failed before. Has it failed for the same reason, again and again.

This is why mechanism cannot be a literature review. It has to be a judgment. You are sorting biology into levels of conviction. Well validated. Plausible but unproven. Weak. Speculative. That is the real job.

2. Efficacy

This is where biology meets the clinic.

Once you leave mechanism, the standard gets harder. A good story is no longer enough. Now the question is simple. Did the drug work in humans.

Not in a headline. Not in a press release. In the data.

A real system has to pull the whole record. Phase 1. Phase 2. Phase 3. Posters. Publications. Company updates. Failed studies too. Especially failed studies. It also has to look at similar drugs in the same class, because no efficacy result means much in a vacuum.

The question is not whether the trial sounded positive. The question is whether the signal survives contact with scrutiny.

That means reading the trial like an operator would. What phase was it. Was it randomized. What was the control arm. Was the study powered well. How long was follow up. What were the primary, secondary, and exploratory endpoints.

Then comes interpretation. Was the primary endpoint met. Were the p values and confidence intervals solid. Was multiplicity handled well. Did the result hold up across sensitivity analyses. Was there subgroup consistency, or did the signal depend on slicing the data until something looked good.

Then comes the harder question. Was the effect meaningful. Not statistically nonrandom. Meaningful.

A small effect can be real and still not matter. A large effect can matter even if the dataset is imperfect. This is where weak systems fail. They confuse significance with importance.

You also have to benchmark against standard of care. What do approved drugs achieve in this indication. What endpoints did they win on. What effect sizes were enough to change practice. Without that comparison, you mistake motion for progress.

Efficacy also has a time axis. A fragile signal often looks good once. Then breaks. So you have to ask whether the evidence is consistent across studies, across doses, across populations. Does the clinical effect match the mechanism, or is there a gap between preclinical promise and human reality. Are there signs of fragility. Small N. Post hoc wins. Endpoint switching. Regression to the mean.

Efficacy is not a binary variable. It is a judgment about whether a signal is durable enough to survive the next trial, the next population, and the next round of scrutiny. That is what matters.

3. Safety

Every drug has a cost.

The question is whether the cost is worth paying.

Safety is where many programs break, often quietly. A drug can hit the biology. It can even show efficacy. But if the exposure needed to work is too toxic, the program dies.

That is why safety cannot be treated as a side note. It is its own dimension.

You start with the exposure base. What trial is this. What drug. What dose. What schedule. What route. What phase. How many patients got each dose. For how long. Was the safety evidence generated in healthy volunteers, patients, or both.

Then you build the record. Treatment emergent adverse events. Grade 3 and 4 events. Serious adverse events. Discontinuations. Dose interruptions. Dose reductions. Withdrawals. Deaths. Prior studies matter. Failed studies matter. Related drugs matter. Regulatory warnings matter. Preclinical tox matters when it helps explain clinical risk.

But the real work starts after collection.

How good is the safety database. Is the patient exposure deep enough to say anything with confidence. Is there a maximum tolerated dose. Were there dose limiting toxicities. Is there a dose toxicity relationship. Is the efficacious dose inside a workable window, or beyond it.

Then comes interpretation. Are the toxicities on target or off target. Expected or surprising. Reversible or cumulative. Easy to monitor or hard to catch. Manageable in practice, or only manageable on paper.

You also have to think by organ system. Liver. Heart. QT. CNS. Blood. Immune system. GI. Kidney. Eye. Infection. Then modality specific risks. Gene therapy. Cell therapy. Radiopharma. ADCs. Oligos. Immune activation. Each class has its own ways to fail.

And safety is never absolute. It is always contextual.

A toxicity that is acceptable in late stage oncology may be disqualifying in migraine. A burden that physicians tolerate in a fatal disease may be unacceptable in a chronic one. So you have to benchmark against placebo, active control, and standard of care. Better. Similar. Worse. Acceptable for the disease. Or not.

Then comes the downstream effect. Could the profile trigger a clinical hold. A boxed warning. A REMS. A narrow label. Heavy monitoring. Commercial friction. Could a subgroup risk shrink the usable population.

Safety is not event counting. It is judgment under tradeoff. What risk. At what dose. In what disease. For what benefit. Against what alternatives. That is the real question.

4. Protocol design

A trial is not just an experiment.

It is a claim about what will count as truth.

If the design is weak, the result can be useless even if the drug works. That is why protocol design matters so much. It is where intent becomes structure.

You have to reconstruct the whole trial. Phase. Study design. Intervention. Endpoints. Sample size. Treatment duration. Inclusion and exclusion criteria. That is not clerical work. That is the skeleton of the decision the trial is trying to make.

Then you ask what kind of trial this really is. Signal finding. Dose finding. Proof of concept. Registrational. Supportive. These are not the same. A fuzzy Phase 2 study can still be useful. A fuzzy registrational study is a disaster.

Endpoint choice is everything. Is the primary endpoint clinically meaningful. Is it accepted by regulators. Is it a surrogate that may or may not translate. Has it supported approval before. Are the secondary endpoints aligned, or just noise around the edges. Timing matters too. Measure too early and you miss the effect. Too late and noise creeps in.

Control arm choice is where many weak programs hide. Placebo versus active control is not a minor detail. It shapes whether the result means anything. Randomization matters. Blinding matters. Bias matters.

Population selection matters just as much. A trial can be enriched for signal. That is sometimes smart. But it can also be so optimized that it stops reflecting the real patients who would get the drug later. Then the program wins early and breaks later.

Statistical design decides how fragile the result will be. Sample size. Power assumptions. Effect size assumptions. Multiplicity control. Interim looks. Missing data. Rescue meds. Dropouts. A study can be nominally positive and still not survive real scrutiny if these parts are weak.

And then there is the part people forget. Operations. Visit burden. Biomarker testing. Imaging. Procedures. Complexity at sites. Complexity for patients. The more burden you add, the more ways the data can fail.

In the end, protocol design has one real test. If this trial is positive, will anyone believe it. And will it be enough to move the drug forward.

5. Enrollment

This is where theory meets the world.

It is easy to say a disease has many patients. It is much harder to recruit them.

Enrollment is where many programs lose years. Sometimes they lose the whole program. Not because the science failed. Because the trial could not fill.

You start with scope. How many patients are needed. How many sites. Where are they. What is the timeline. Is the study recruiting. Stalled. Delayed. This tells you how hard the task really is.

Then you turn epidemiology into a funnel. Start with prevalence. Then cut it down. Diagnosed patients. Treated patients. Eligible patients. Biomarker positive patients. Patients willing to join. What looks large on paper often becomes small in practice.

Incidence versus prevalence matters too. Acute diseases recruit differently from chronic ones. Geography matters. Patients may exist, but not near the sites that matter.

Eligibility is often the hidden trap. Tight criteria. Prior line requirements. Biomarker screens. Complex procedures. All of these drive screen failures. Then comes competition. Other trials may be recruiting the same patients from the same sites at the same time. Approved therapies may reduce willingness to randomize. Good sites are finite. Overload them and everything slows.

Site strategy matters. Number of sites. Quality of sites. Academic versus community. Geographic spread. Activation speed. Dependence on a few star centers. These are not side issues. They decide whether the timeline is real.

Benchmarks matter most. Patients per site per month is real. It varies by disease and geography. If your plan depends on enrollment rates above what history supports, your plan is weak, no matter how pretty the slide looks.

And enrollment is never just about speed. Delay changes everything. It burns cash. It shifts readouts. It can turn a first mover into a follower. It can move a promising asset from relevant to late.

Many drugs do not fail on science. They fail because they cannot finish the trial.

6. Team

Drugs are built by people. (For now).

In human-developed pharma, the quality of a team shapes the quality of every decision that follows. Trial design. Regulatory strategy. CMC execution. Fundraising. Hiring. Partnering. Recovery after failure. None of this is random.

You start by mapping the people. CEO. CMO. CSO. Clinical lead. COO. Board. Advisors. Key investigators if they matter. But titles mean little by themselves. What matters is what these people have actually done.

That is where the work gets hard. The data is scattered. You have to connect individuals to prior companies, prior programs, prior outcomes. Approvals. Failures. Exits. Near misses. And you have to separate proximity from ownership. Being present during a successful program is not the same as driving it.

Then you look across a few hard questions. Has this team taken programs from preclinical to IND. From Phase 1 to Phase 2. From Phase 2 to Phase 3. From Phase 3 to approval. Each step has its own traps. Experience in one does not guarantee skill in the next.

Then relevance. Oncology experience does not automatically map to neurology. Small molecule experience does not automatically map to gene therapy. Early stage builders do not always become late stage operators. Commercial talent does not always help in preclinical strategy. Context matters.

Track record matters too. Are these repeat winners. Mixed operators. First timers. Failure is not disqualifying, but it has to be understood. What was their role. What did they learn. What pattern do they show.

Clinical and regulatory strength matter more than most people think. A strong CMO can rescue a program by designing the right trial. A weak one can kill it by getting the basics wrong. The same is true for regulatory leadership.

Then comes completeness. Are all the major functions covered. Clinical. Regulatory. CMC. Finance. Commercial if the stage requires it. Are there obvious holes. Is too much resting on one person. Are the advisors real contributors, or just names on a slide.

Scaling risk is real too. A team that is strong at the seed stage may struggle at Phase 3. A team that knows development may not know launch. Some teams grow with the asset. Some do not.

People are not interchangeable. Good systems need to know that. The probability of success depends, in part, on who is building the drug.

7. Regulatory

Regulatory is not magic.

It is history, made formal.

People often talk about approval as if it were opaque. It is not. Regulators are not improvising. They are applying precedent. Every approval teaches you something about the boundary of what is acceptable.

So you have to map that boundary. What drugs were approved in this indication. On what endpoints. In what populations. With what trial designs. Under what safety burden. Were the endpoints hard outcomes or surrogates. If they were surrogates, how established were they.

These are not background details. They are the rules of the game.

Then you compare. Does the current program align with that history, or drift away from it. If it drifts, is that a smart innovation or a dangerous gamble. An endpoint that has never supported approval may be visionary. It may also be dead on arrival. A trial design that departs from prior registrational standards may produce positive data that no agency wants to trust.

Alignment matters more than novelty here.

You also have to read the softer signals. Agency interactions matter, even when only partly disclosed. End of Phase 2 meetings matter. Designations matter too. Breakthrough Therapy. Fast Track. Orphan. RMAT. These are not just badges. They tell you how the agency sees the asset, the unmet need, and the path ahead.

Safety tolerance sits inside regulatory logic. Different diseases allow different levels of risk. Oncology is not obesity. Rare fatal disease is not chronic primary care. A system has to internalize those thresholds and measure the program against them.

And you have to think in failure modes. Could this end in a complete response letter. A demand for another trial. Rejection of the endpoint. Rejection of the effect size. Delay due to safety. Restriction to a narrow label.

Regulatory risk is rarely random. It is patterned. If you can model the pattern, you can often see the outcome before the meeting happens.

8. Manufacturing

A drug that cannot be made is not a drug.

That is the blunt truth of CMC.

This is where biology meets physics. It is where elegant science collides with yield, purity, consistency, storage, and scale. Many programs that look strong in papers break here.

Start with the modality. Small molecules. Biologics. Cell therapies. Gene therapies. RNA drugs. ADCs. They do not share the same manufacturing world. Some are robust. Some are fragile. Some are cheap to scale. Some are hard to make even once.

Then ask how mature the process is. Early processes move. Conditions shift. Methods evolve. Yields drift. Late stage processes should be locked, reproducible, and validated. The transition between those states is one of the most dangerous moments in development.

Scale is the next trap. A process that works in a lab may fail in a plant. Small batch success does not guarantee commercial success. At scale, throughput matters. Batch consistency matters. Tiny variations can change the product, especially in complex modalities.

Then comes change over time. If the process evolves between early and late trials, the product may not be the same. That creates comparability risk. You may end up with clinical data on one version and a commercial plan for another.

Quality systems decide whether the product can be trusted. Analytical methods. Release specs. Batch variability. Stability. Shelf life. Cold chain. Handling. A product that degrades too fast or varies too much is a problem no matter how strong the efficacy data is.

Supply chain matters more than people admit. Single source dependencies. Rare raw materials. Geographic concentration. Fragile vendors. All of these can break a program at the wrong time.

Cost matters too. A drug can work and still fail if it is too expensive to make. Cost of goods shapes margins, access, and pricing power.

CMC is not a box to check. It is a map of whether the product can exist in the real world. Reliable. Scalable. Economic. That is the test.

9. Intellectual property

Science alone does not create durable value.

If you cannot defend the asset, someone else will take the margin.

That is why IP matters so much. Not as legal decoration. As economic reality.

The first question is the strongest one. Do you own the molecule, or the construct. Composition of matter is the core. If that protection is broad and durable, it creates a real moat. If it is narrow or absent, competitors may be able to walk around it.

Then comes breadth. Does the protection extend across indications, formulations, dosing regimens, combinations, manufacturing methods. Strong portfolios do not rely on one claim. They layer protection. Weak ones leave doors open.

Time is as important as breadth. Patent life is finite. What matters is not the filing date alone, but how much runway remains at expected approval. A therapy that arrives with little protection left is in a very different position from one with a long exclusivity tail.

Then there is freedom to operate. A company can have patents and still be boxed in by someone else’s claims. Overlap matters. Blocking patents matter. Licenses matter. Settlements matter. You have to map the landscape, not just admire your own fence.

Litigation is part of the story too. Patents get challenged. Some collapse. Some survive. The likelihood of challenge, and the strength of the claims under pressure, matter a great deal.

And then there is practical defensibility. Even if the paper protection is there, how hard is it for a competitor to build something close enough to compete. Can they tweak the structure. Can they design around formulation. Can they enter with a biosimilar path.

IP is not static. It is a living system that decides how long an advantage can hold. Good biomedical intelligence has to read it with the same rigor it brings to clinical data.

10. Disease population and market size

This is where biology meets scale.

A drug may work. The next question is how many people it can really help, and what that is worth.

It starts with epidemiology. Incidence. Prevalence. Growth. But top line numbers mislead. The real work is in the cuts that come after.

How many patients are diagnosed. How many are treated. How many fit the label. How many meet the biomarker. How many reach the right line of therapy. Every filter shrinks the pool. What looks huge at first often becomes modest by the time you reach the true addressable population.

Segmentation matters. Diseases are not one thing. They have subtypes. Severity tiers. Biomarker subsets. Early line and late line use. A refractory niche is not a frontline market. A broad chronic disease is not the same as a narrowly defined genetic subset.

Geography matters too. Diagnosis rates differ. Treatment rates differ. Access differs. Reimbursement differs. A global number can hide a very uneven reality.

Growth matters. Some diseases expand because populations age, screening improves, or diagnosis gets better. Others do not. You cannot treat the market as static if it is moving under you.

Then comes the hard translation. Patient numbers into revenue. That means price. Penetration. Duration of therapy. Access. Those assumptions have to be explicit, because they drive the whole model. Small changes in uptake or pricing can swing the result far more than people expect.

This looks like math. It is really assumption management.

Most market models fail not because the arithmetic is wrong. Because the hidden assumptions are weak. Good systems make those assumptions visible and test how much they matter.

11. Commercial reality

Approval is not adoption.

Many approved drugs still fail. They fail because the world does not behave the way the slide deck said it would.

Commercial reality starts with differentiation. Is the drug clearly better than standard of care. Better efficacy. Better safety. Better convenience. Easier dosing. Easier administration. If the answer is only marginally yes, behavior may not change.

And behavior is the whole game.

Physicians have habits. Patients have fears. Payers have budgets. Hospitals have workflow. A product enters all of that at once.

Pricing is one part of it. A company may believe the clinical value supports a premium price. Payers may disagree. Then come step edits. Prior auth. Restricted access. Narrow coverage. Cost effectiveness thresholds. These are not downstream annoyances. They shape uptake from day one.

Practical friction matters just as much. Does the drug require biomarker testing. Does it need infusion centers. Is the dosing schedule awkward. Is monitoring intense. These things matter in the clinic far more than many models admit.

You also need launch benchmarks. What happened when similar drugs launched. How fast did they grow. Where did adoption stall. What access barriers appeared. Without that history, forecasts tend to become wishful thinking.

Competition changes the commercial picture too. A drug entering open space is one thing. A late entrant with modest differentiation is another. First is not always best. Best is not always enough. But timing always matters.

At the core, this dimension is about incentives and behavior. Physicians, patients, and payers each respond to different pressures. Any system that ignores that will overestimate uptake.

Commercial success is not just clinical value. It is clinical value filtered through the real world.

12. Competition

No drug exists alone.

Every program lives inside a moving field of other programs. Approved drugs. Late stage challengers. Early stage threats. Same mechanism. Different mechanism. Better safety. Better timing. Better convenience. All of it matters.

So the first job is mapping the field. What is standard of care. What is approved. What is in Phase 1, 2, and 3. What mechanisms are in play. What modalities. What patient groups. What readouts are coming.

But mapping is not enough. You have to compare.

Direct competitors matter most when they hit the same pathway. That is where class effects emerge. One program’s failure can poison the well. One program’s success can validate the whole area.

Indirect competitors matter too. A new modality can make the old frame obsolete. Gene therapy can reset the bar for chronic drugs. A safer oral can damage an infusion franchise. A different mechanism can win if it solves the problem better.

Timing is brutal here. Who reads out first. Who reaches the market first. Who comes later with better data. A program that looks strong today can be leapfrogged before launch.

So differentiation has to be judged in context. Better efficacy. Better safety. Better convenience. Something that matters. If the difference is thin, the drug risks becoming a me too product in a crowded field. If the difference is real, it may become best in class. But that judgment cannot be static. The field keeps moving.

Market structure matters too. Some spaces can support many winners. Others collapse into a few dominant players. You need to know which kind of market you are in.

History helps. Every therapeutic area has a pattern. Some mechanisms keep working. Others keep disappointing. Some strategies win early and hold. Others get overtaken. A good system learns those patterns and uses them.

Competition changes the meaning of everything else. Strong data may not be enough if someone else is stronger. Middling data may still win if the field is weak. Nothing is judged in isolation.

That is why competition is the final dimension. It turns every absolute into a relative one.

Why these twelve matter together

What makes these dimensions powerful is not just what each one says. It is how they interact.

A strong mechanism can be ruined by weak protocol design. Good efficacy can be capped by safety. A well run program can still lose on enrollment. A good drug can fail in the market. A strong asset can be boxed in by weak IP. A promising program can be overtaken by competition.

This is the real challenge for clinical trial intelligence.

It is not enough to retrieve papers and summarize them. The system has to do four harder things. It has to retrieve the right primary data. It has to structure and compare that data. It has to judge what matters. And it has to develop taste.

That last part matters most.

Retrieval is getting solved. Analysis is getting solved.

Judgment is not.

Taste barely exists.

And taste is what tells you when a result feels brittle. When a protocol is optimized to win. When a management team is out of its depth. When a safety signal will widen. When a market model is built on fantasy. When a program that looks strong on paper is weaker than it seems.

That is why current systems still fail in high stakes biomedical work. They can find. They can summarize. They can rank. They still struggle to know.

The path forward is not just bigger models. It is better framing.

These twelve dimensions offer one such frame. They define the major decisions that shape drug development. They force the right questions. They create structure where people often rely on instinct alone.

Miss one dimension and the program breaks.

Not always at once. Not always loudly. But predictably.

Capture all twelve, and you begin to see the system the way the best drug developers do.

That is the bar.